Gravity Wells and Disillusionment Troughs: A Practical Guide to Agentic Engineering

or: How I Learned to Stop Worrying and Love the Claude

NOTE: This is a series on my education with AI-driven software development. I'm starting with why, for those cynical, suspicious, or ambivalent about AI, as I was only 2 months ago. Go straight to Part 2 if that’s not you, and you’re here for how. And if you already know all the how's, and just want to know my specific workflow, jump straight into Part 3. Footnotes are clickable. I feel obligated to add: this was written by a human, for other humans. If your instinct is to ask an AI model to summarize this post, it is not for you.

But first, a warning: This series is for software professionals who already have the skills, experience, and judgement that AI and agentic engineering are affecting. If you are a new grad, or still in school, I think you should still read it, but at some point you will hit a moment when you realize “this is not for me”. I’ll be referring to subjective human professional judgement that you may not yet have access to. I’ll probably need to write a dedicated guide for software engineers early in their career.

Overture

In 1907, Einstein proposed a thought experiment for the equivalence principle between gravity and acceleration: Imagine you are standing inside a windowless elevator. How can you tell if you are on Earth, being held down by gravity, or in a spaceship accelerating away from your feet? Or flip it around, and you’re in zero-G. Are you floating in space, or in free-fall, about to meet the Earth at an unfortunate speed? You can’t tell.

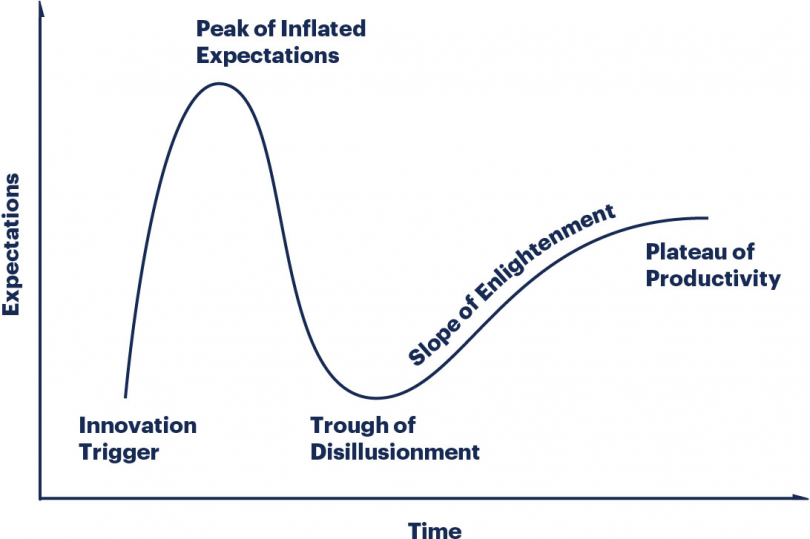

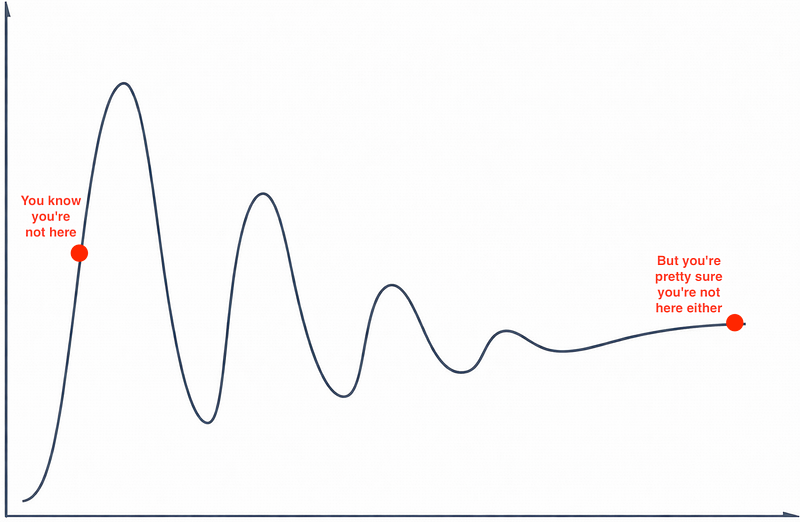

I often think about this principle when I see the “Gartner Hype Cycle”. How do you know if you’re on the first upward-climbing slope or the second?

Especially when the actual curve/cycle looks more like this:

Intermission

As a society we are going through an attention span crisis, and I am a well-known yapper who prefers the long version of every story. This is a dangerous combination. I promised you practical advice on agentic software engineering, and I am not a liar. But I’m also not known for building in public. My name on a blog isn’t enough to give credibility to any advice I offer. I’ve spent the last 13 years at Amazon Web Services working on services you know, but you've never seen my code. So, I want you to understand where I started from, and where I ended up, so you can take both my advice and my warnings seriously. I have nothing to sell to you (well, at least not in the AI space). But I need you to understand me.

Me

I started my first full-time post-university job as a software developer in February 2006. Since then, I’ve watched multiple “once in a generation” technical breakthroughs/mental model shifts, from Web 2.0/Social, to Mobile, to Cloud. And I’ve had time to grow cynical about a few things that people said were just misunderstood and waiting for their big moment, but that never made the same impact as those earlier shifts, from crypto/blockchain to AR/VR. I went from a small Vancouver-based startup, to Big Tech (working on EC2, EventBridge, and Lambda, eventually as a Principal Engineer), and now back to a small Vancouver-based startup (Safety Cybersecurity, focused on securing the software development lifecycle).

I don’t think I have particularly prescient judgement. I’ve been wrong about a lot of things, and late to the party on even more. But I do have a strong sense of pragmatism, integrity, and the ability to untangle a mess of ambiguous vibes into concrete insights. In my pretty pragmatic peer group, I’ve seen broad consensus on the things that never got past their Peak of Inflated Expectations (*cough* blockchain), and hot debate on stuff on the Plateau of Productivity (e.g. Kubernetes vs Serverless). I have never seen a divide in my community as large as the one I see today on the state of AI-assisted software development.

6 weeks ago I started a new job while firmly in the trough of disillusionment, with the intention of properly figuring out if I deserved to be there. You might be able to guess what happened next.

AiDHD

If you ask any manager I’ve ever had, they would probably tell you Nikki has Pathological Demand Avoidance and is “high-maintenance” to manage. I thought I was doing better as my 30s turned to 40s¹. That changed in 2025.

Like everyone else, I observed with excitement as chatbots evolved from Eliza to ChatGPT over the course of my career. I loved Deep Dream, I mocked Will Smith’s spaghetti, grew excited for image generation, then just as quickly grew concerned over the devaluation of artistic creativity gleefully championed by my industry. It was clear enough that there was something to these LLMs, but the benefits came with so many compromises. Awkward browser chat tooling, bland, empty, impersonal writing, repetitive art styles, and endless hallucinations. I had Copilot, then ChatGPT, write some one-off scripts or unit tests, and they were OK. Friends and coworkers who were ahead of me on the curve left and started their own AI coding startups (hi Jakub), but I just didn’t feel the value was there for me.

So when I started feeling the top-down pressure to embrace AI tooling in my development in the summer of 2025, I did not handle it well.

“Don’t you know we have Distinguished Engineers who are vibe-coding in meetings with VPs? What’s your excuse?”

This came from a trusted leader & friend. It sounds worse on the page than it was in person. We were joking around. He was excited about the changing landscape, and the opportunities for busy Principal Engineers like me, who spent 90% of the day in meetings and document reviews, to code more. It was well-intentioned. But it was also a wind change. The company did want me coding more. They wanted me to use AI tooling more. And they trusted me (and my peers) less to make that decision for myself.

I said all the clichés (and recent truths). It doesn’t work. The hallucinations are too irritating. It doesn’t write good code. I have to spend more time fixing problems than if I just did it myself the first time. I’m a professional, I’ll pick my own tools, don’t tell me how to do my job. This should be something decided bottom-up, not top-down².

I wasn’t wrong. I wasn’t exactly right, either. I was in the trough of disillusionment.

The Cracks

If you follow AWS and you watched the 2025 re:Invent keynote, you saw CEO Matt Garman talk about Kiro, AWS’s AI IDE and CLI³, and its recent launches. This part of the keynote culminated in a story by an AWS Distinguished Engineer who used these tools to essentially do a ground-up rewrite of a substantial piece of Amazon Bedrock in just a few months with a small tiger team. Now, I could nitpick (and I did at the time) the marketing veneer that twisted reality to fit the keynote narrative, but the core essence of the story was true, and impressive. Remember, people had already been talking for months about peeps from that team coding (not vibe-coding⁴, incidentally) in meetings. A small tiger team of highly competent AWS engineers took a core piece of AWS infrastructure (inference job scheduling for Bedrock), and rewrote it from scratch, entirely using LLM coding tools, in essentially weeks⁵. And then that same DE did a company-wide tech talk to explain what he had learned. To say that this talk caused quite a stir would be an understatement. This was a genuine technologist, sharing valuable insights, the biggest one of which probably forms the most essential piece of advice I have in Part 2 of this series. And he was sounding the alarm bell: everyone needs to figure out how to do the same on their teams, or be left behind.

This is a very charitable summary of the talk. I had and have a lot of problems with it too, and grave concerns about the ability of Amazon at large to implement most of its ideas at scale. But more to our point today, I want to remind you that I heard it from the depths of cynicism and disillusionment. I was in no way convinced. But I was also struck by the part of the talk that essentially said: Learning to use these tools effectively is like learning a new language (he did not say programming language). You must immerse yourself in them. And it takes time. Months. I knew I needed to find out for myself.

My Google Maps Moment

If you’re under the age of 35, you don’t really understand the impact Google Maps had on the tech world in 2005. Everyone who reminisces about that era of Google products always brings up Gmail, because it’s a more accessible story - built as someone’s 20% time project, launched on April 1st, with 1 GB of storage (500x more than Hotmail offered at the time). And yeah, invites for that were going like hotcakes. But you don’t understand the shockwave that shuddered through every techie the first time they clicked zoom on Google Maps, and the map zoomed, and the browser page didn’t reload.

How did it do that.

If you weren’t there, the closest I can come to showing you what that felt like is the crowd reaction to Steve Jobs pinch-zooming the first iPhone. You have to remember, Google didn’t have a browser then. Browsers were just dumb clients requesting HTML from servers, drawing it verbatim. JavaScript was a toy, mocked universally. AJAX, the magic behind that Google Maps zoom (concretely the XMLHttpRequest object), had been around since 1999. Google didn’t invent AJAX. But they figured out a way to use it to create a new type of website, one that changed what a “website” meant for the next 20 years.

This is also how I felt after my first full week of using Claude Code in January 2026. The shudders weren’t instant. But there were multiple of them. And they kept building. While I kept building features, implementing a 30,000 LOC feature in a month that should’ve taken me six. That’s rookie numbers for vibe coders, but I was not vibe-coding (see footnote 4 again). Every line of code was reviewed by me before it was approved by others (because it’s rude to show AI output to people before you’ve read it yourself). It was also my first month at my new job.

Here is where things get tricky. I would be wasting both of our time if I now left you with some “Unfortunately, no one can be told what (building with Claude) is. You have to see it for yourself.” meme. But I have an idea.

In Part 2, I’m going to tell you about the mental shift I underwent during that first month of full-time Clauding. How I learned that the classic complaints of coding with LLMs can be essentially solved - if you know how - and I’m going to teach you. How I was so worried that I was going to be infuriated watching my agents make the kinds of mistakes even interns don’t. That I would be forced to abandon my job as a craftswoman, and become a manager of brilliant morons with no memory and no ability to learn. And how I quickly found joy in (and the ability to teach) my tiny swarm of agents. By the way, as I write this in February 2026, clever people I trust are already saying OpenAI’s Codex 5.3 has lapped Claude and is better. We’re going to understand in Part 2 why that is super subjective, but ultimately doesn’t matter. The arms race will continue for some time. The lessons and advice will carry over until they won’t.

We’re going to start with the idea that this job isn’t software engineering anymore. Nor is it “prompt engineering”. It’s a game of resource management.

Footnotes

(tip: click on footnote to go back to where you were reading)

1 PDA, now more commonly referred to as Persistent Drive for Autonomy, is a very common affliction of the neurospicy folk. Becoming a mom and raising a child confronted me with a preschool-aged version of myself, with none of my skills or ADHD pills, and 10x the willingness to scorch the earth over being told what to do. This taught me a lot of humility and chilled me out on a lot of other things outside of my control, most notably Petty Hills To Die On At Work. I used to have a lot of those, but not nearly as many in the 2020s. Independently from all this AI mumbo jumbo, if you struggle with ADHD & Autism in the tech industry, I also wrote The Neurodivergent Big Tech Survival Guide.

2 It didn’t help when an edict came down from SVP leadership that all developers at the company must adopt Amazon’s own Kiro over all others (Codex, OpenCode, Claude). I was experimenting with all of them at the time, and the last thing I wanted to do was use the only one I was allowed to, even if it was good enough. I understood why strategically it was the right decision for the company, but it also told me and every other engineer not on that product: “Your work is less important than our AI strategy”.

3 Kiro IDE was always Kiro IDE (a spec-driven VSCode fork à la Cursor). But Kiro CLI was Q CLI, which was CodeWhisperer CLI. Underneath, it’s all Anthropic, and AlwaysHasBeen.ohio.

4 The English language evolves faster than anyone is ever happy with, but at time of writing, the term “vibe coding”, as Karpathy intended, means using AI to create software without looking at the code it generates. You know there’s code, you know you’re source controlling it, compiling it, testing it, and deploying it. But you’re not reading it or trying to understand it, because it doesn’t matter. In contrast, if you’re using AI to generate code, but you make sure to read it and understand it, because you’re going to maintain and operate it, that ain’t vibe-coding. It can still be massively AI-assisted, and Simon Willison calls it vibe engineering. I personally love this term, I think it’s cute! (a vibe, even…). Most of my peers disagree. Oh well.

5 The caveats to the story are, for the record: It was (is?) a team of some of the most experienced AWS engineers around — deeply technical and credible people; who basically stopped going to all other meetings; stopped doing other ops work (except customer incidents for the service they were rewriting, smart); sat in a co-located space so they could talk architecture all day; and followed some unorthodox code review practices.