Close Enough for Jazz: A Practical Guide to Agentic Engineering, Part 2

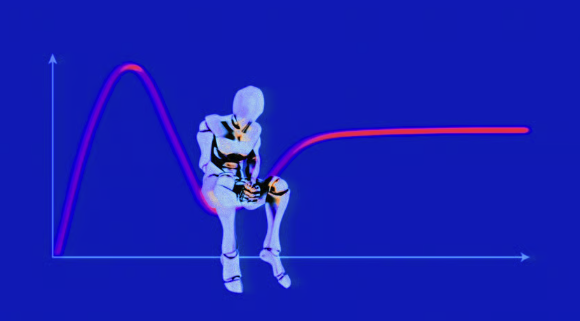

Prompt Engineering is dead. Long live Context Engineering

NOTE: This is Part 2 of a 3-part series on my education with AI-driven software development. Part 1 is the why. This is the how. Part 3 is the specific whats. Footnotes are clickable. I feel obligated to add: this was written by a human, for other humans. If your instinct is to ask an AI model to summarize this post, it is not for you.

It only has 3 buttons, how hard could it be

When I was starting High School, I had to take a mandatory arts elective, and I wasn’t very happy about it. Today, at 40, I have a rich appreciation for all sorts of creative outlets, and see my software development profession as one. But heading into grade 8 in 1996, I was consumed entirely by math, science, and computers, and didn’t think much of a choice between painting, woodworking, or music. I wanted to pick the easiest option in the easiest class I could imagine, so I could blow it off. I chose music, and I chose the Trumpet.

Some of you are already laughing, but I was 11, and not exactly brimming with humility. I truly thought “It has the fewest buttons, so it’s going to be the easiest. After all, there are also a finite number of notes.” Almost 30 years later, I still love hearing the trumpet, especially mixed with electronic music (any brass instrument, really), but my Trumpet career didn’t even make it to grade 9. It turns out that knowing which note to play, and memorizing which button combination makes it, isn’t actually enough to get a competent result out of your instrument.

This is the first thing to internalize when trying to get productive with agentic engineering. The essential skills are not part of the API contract. “Just tell the agent what you want it to code, and watch it do it” is the Trumpet and its three buttons (they’re called valves, but I don’t even know if I learned that before I gave it up). There’s a bit more to it than that, and it needs practice.

The second thing to absorb is that getting good at this, just like the skill of making music beyond theory, is fuzzy and analog. “To make a B sound, press the 2nd valve” is deterministic digital knowledge. “Vibrate your lips at just the right speed” is an analog skill. Software development used to be more predictable¹. You learn the imperative commands of an API surface (for the vast majority of engineers, a programming language). You learn their inputs, outputs, costs, and side effects. Then you compose them into increasingly complex abstractions that can solve increasingly complex problems. This has been true from NAND gates to Python packages, and everything in between. No more. Skillful agentic engineering is analog. We’re going to explore shortly how we can wrestle back some measure of predictability for our toolchain, but even so, I want you to really pause and accept that the success criterion isn’t perfection. It gets generally less fuzzy with every new LLM generation and every coding harness update (Claude Code and Codex are harnesses), but in a two-steps-forward, one-step-back kind of way. Basically they’re making new and better Trumpets every month now, and your skills mostly transfer (if you internalize these lessons), but you must plan to be a bit flexible.

The third insight is that coding has always been like music, and it is only even more true now: There Is More Than One Way To Do It. With the explosion of new tools and techniques, everyone is asking what is the right way of coding with LLMs, or what is the best. Fundamentally, that is like asking what is the best IDE. You can learn from me, Mitchell Hashimoto, Boris Tane, Alex Wood, Simon Willison, Steve Yegge, this guy (wish I knew his name, great guide), or all of us. You will discover your own style. Just as long as you understand your primary objective: resource management.

Settlers of Claudtan

Our industry has been derisively waiting for software engineers to get replaced with prompt engineers since 2023. We now realize that wasn’t quite right - crafting a good prompt is less and less important. Effectively managing your agentic context is now the main job: the tools, skills, scope, and state. Hallucinations are dramatically reduced, so long as you practice good context discipline on all of these. The subtle ones - the ones that sound right and survive your review - are a different story, and we’ll get to those in Part 3. Your agent harness can actually look around corners of an incomplete plan, even argue with your bad or short-sighted ideas, but only as long as you’ve left enough space in your context window. None of this changes with each new generation of models and larger windows; only the horizon of your sessions does.

Context rot is the biggest risk to a successful plan execution. And the problems appear a lot sooner than you think. By the time your agent offers to auto-compact, it is far too late. You have to be following SCUBA practices: half of your air tank is for your buddy, and half of your context window is for hallucination-prevention. Nitrogen Narcosis makes SCUBA divers chase clownfish until their air runs out, and Context Rot makes LLMs forget to write unit tests, and worse. But noticing it and stopping it is not an exact science, so the learning curve feels like that of no other framework or programming language. It feels like developing a new sense, emotional as much as technical. You’re going to pass through three specific moments, and how you respond to each one determines whether you actually git gud, or get frustrated and write on LinkedIn about how AI is overhyped.

The Three Trials

Delight

You will need to push your LLM harness. If, after a week or two, you haven’t had a moment where the agent genuinely exceeds your expectations, you’re not being ambitious enough. You won’t find the limits of possibility until you prompt for something too ambiguous, complicated, or esoteric, out of laziness, and get delighted. Give it way too much context and watch it untangle the nuance. Paste 3 pages of messy stakeholder requirements and meeting notes, and watch it come back with 16 numbered architectural decisions, each with alternatives considered and rationale, and a set of questions about things you didn’t even consider.

Then try something adversarial. Ask it to write a security review of its own design, clear the context, and tell it to be adversarial with itself. Use your anxieties as test cases. If you’re worried it won’t write enough tests, ask it to write more tests. If you’re worried those tests will be redundant assertions of their own setup - tell it that, specifically. If you’re worried about cognitive debt piling up while you lose track of what it’s generating, ask it to teach you as it goes. Give it a screenshot with a bug without saying where it is. Drop in a failed build URL with no other instructions. The delight isn’t that it always succeeds. It’s that it succeeds at things you wouldn’t have bothered trying before.

Here's one that I tried this week to monumental success:

There was a deployment today: <link to GitHub action>. During that time there was a much bigger availability drop and latency spike than I would've expected: <screenshot of dashboard> Use the AWS CLI and check CloudWatch, ECS, etc, and root-cause it.

Betrayal

Cursed, sudden, and inevitable. You will follow every best practice, and 99 runs in a row you’ll hand an agent 10 tasks and watch it nail all 10. The 100th time it’ll do 8, silently skip one, and hallucinate a confident explanation for why the last one was unnecessary. Welcome to non-determinism. This moment is essential - not to create cynicism, but to install vigilance. You need this betrayal the way every junior needs to break production. You’ll start spotting when the agent forgot to source your .env for the first time all week and is catastrophizing down a rabbit hole. Or when it decides that its own bug from earlier in the session is a "pre-existing problem" it doesn't have to fix. The practical response here is not to trust less, but to verify better.
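One way to make "verify better" concrete is to stop taking the agent's word for what got done. Here is a minimal sketch, assuming your plan is a markdown checklist and that you collect your own evidence (a passing test run, a diff you actually read) per task. The function names and the `evidence` mapping are hypothetical, purely for illustration, and not part of any harness.

```python
import re

# Matches markdown checklist lines such as "- [x] add endpoint" or "* [ ] write tests".
CHECKBOX = re.compile(r"\s*[-*]\s+\[([ xX])\]\s+(.*\S)")


def parse_tasks(plan_markdown: str) -> dict[str, bool]:
    """Map each checklist item to whether the agent ticked it off."""
    tasks: dict[str, bool] = {}
    for line in plan_markdown.splitlines():
        match = CHECKBOX.match(line)
        if match:
            tasks[match.group(2)] = match.group(1).lower() == "x"
    return tasks


def audit(plan_markdown: str, evidence: dict[str, bool]) -> dict[str, list[str]]:
    """Split tasks into ones left unchecked and ones checked without proof.

    `evidence` is a hypothetical mapping you fill in yourself: task name to
    True only when an independent check passed.
    """
    tasks = parse_tasks(plan_markdown)
    skipped = [name for name, done in tasks.items() if not done]
    unproven = [
        name for name, done in tasks.items()
        if done and not evidence.get(name, False)
    ]
    return {"skipped": skipped, "unproven": unproven}
```

Anything that lands in `skipped` or `unproven` is where the 100th run went sideways, and it is a much shorter list to review than the whole session transcript.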

Pride

Delight happens when the agent is autonomous and smart. Betrayal happens when the agent is autonomous and dumb. But what happens when it asks for help to make a subjective decision? Or even when it makes one, and you know it’s subjective, but you disagree? There may be more than one way to do it, but that’s not the way you want to do it today. And you know why, even if the agent doesn’t.

That’s when you feel some professional pride, and realize that this moment, this judgement, is why you - a professional with skill, experience, and taste - still matter to the loop of software creation. The model getting smarter doesn’t matter when all the remaining choices are subjective and human. The discrimination between when to let go and when to intervene is itself a new skill. It’s the one that separates vibe engineering from vibe coding.

With all that said, let’s build the discipline that makes it all work anyway.

M̶e̶a̶s̶u̶r̶e̶ ̶t̶w̶i̶c̶e̶ Plan five times, c̶u̶t̶ implement once

Every MCP, every plugin, every skill, every RAG, every bit of prerequisite understanding you want your agent to start with uses context. If you want it to keep in mind most of your codebase, and it’s a bit monolith-y, and you’ve excitedly installed the MCP for every tool you’ve ever thought of using, you might hit that 50% mark on your context window before any useful work. That means from the start, you need to be intentional about what tools you load, and plan defensively for what you’ll do if you get stuck in a dead end and need a fresh tank of oxygen, err, a clear session context. And if you, like most engineers, hate the idea of people (and bots) you work with making the same mistake twice, or having to be told the same thing over and over, you need to give your agent memory. Here’s what this means, in order of descending importance:

Always have a durable plan. This can be Claude’s plan mode, it can be OpenSpec (my preference), or anything else you prefer, but the flow goes Plan, Plan, Plan, Plan, Plan (clearing your context each time it needs to be). Then implement (we’ll get to that). Don’t be too proud to ask your agent to take the plan it gave you and Do Better. Give specific feedback, sure. But don’t be shy to just tell it to rewrite it 2 or 3 times before you even look at it².

Build stepping stones. If the agent is going to scan through your codebase to get a sense of your DB model, get it to output that to an artifact. Now the next human in the repo benefits, and the next agent run needs less context budget to catch up to the same level of understanding (we’ll talk later about how to deal with divergence). If you run a code review agent, get it to output its findings to your plan’s task list, or an issue manager. Other stepping stones are codebase maps (e.g. with cartographer), data model docs, and repo-specific skills. We’ll explore each of these in Part 3, but for now just remember that separation is important, and not to dump everything into one AGENTS.md.

Learn which tools you need when. If you’re planning and need to poke around your company’s existing observability (or if you’re working on a production issue), have the Sentry CLI and MCP enabled. Otherwise, don’t bother. AI agents love CLIs, so if your SaaS vendor has one, err on the side of that, plus their MCP.

Etch lessons into stone. Every time an agent does something stupid, illogical, unpredictable, expensive, or just needs a lot of guidance, write it down. It could be a skill, a command, the repo’s AGENTS.md, or your own, but it needs to go into the cultural memory of not just your next agent run, but your teammate’s in the same repo. An AWS hero once said “Never complain about AWS somewhere where AWS can’t hear”. The same is true with LLM coding agents. This applies to cognitive debt, too. If you feel your cognition of the code slipping, pick an explanatory style, or directly ask the agent to generate more explanations.

Subagents aren’t even cattle - kill them without a second thought. Short lifecycles - one task to implement, or review, log some state, die. And do make sure that these are subagents - they get their own context window. However, most agents seem to like to give subagents inferior model access by default. Override that. Don’t compromise on reasoning; always pick the highest-level reasoning options. That goes double for code review agents - always ensure they have a clean context. You don’t need fancy adjudication between different LLM providers. Just the same smartest model with a clean slate and no bias.
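To make the "etch lessons into stone" habit from the list above cheap enough to actually do, here is a rough sketch of a helper that appends a lesson to a shared markdown file and skips exact duplicates. The file path, the heading text, and the function name are all assumptions of mine for illustration. Your harness may already have its own mechanism, and a skill or command may be the better home for a given lesson.

```python
from pathlib import Path


def record_lesson(path: Path, lesson: str) -> bool:
    """Append `lesson` as a bullet to a lessons file, skipping exact duplicates.

    Returns True if the lesson was written, False if it was already recorded.
    The file layout (a heading plus one bullet per lesson) is an assumption.
    """
    bullet = "- " + " ".join(lesson.split())  # collapse the lesson onto one line
    text = path.read_text(encoding="utf-8") if path.exists() else ""
    if bullet in text.splitlines():
        return False
    path.parent.mkdir(parents=True, exist_ok=True)
    with path.open("a", encoding="utf-8") as handle:
        if not text:
            handle.write("# Lessons for agents\n\n")
        elif not text.endswith("\n"):
            handle.write("\n")  # avoid gluing the bullet onto the last line
        handle.write(bullet + "\n")
    return True
```

Because the file lives in the repo, the lesson reaches your teammate's next agent run too, not just yours. Keeping it in its own file (say, a hypothetical `docs/agent-lessons.md`) is one way to avoid dumping everything into a single AGENTS.md.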

You need to try this all for yourself, and don’t be afraid to ignore some of this advice (I have). Don’t be shy about getting lazy (I have). The shortcuts you take to see how far you can push it will teach you a sense of the limits. You’ll develop a sense for when it’s OK to launch directly into Accept-All-Edits mode, and just tell the agent what to do. And when you need every phase of an OpenSpec specification, only to tell your agent “implement the first task and stop” because you’re pretty sure it’ll be out of context by then. You’ll experience that frustration as if you’re arguing with a child, then realize you’re at 80% context. No matter, those stepping stones we created are save points. If anything goes wrong, you don’t have to go back too far.
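If you want a number to go with the SCUBA rule, here is a back-of-the-envelope sketch of a context budget that tells you when you have burned through the half of the window you are allowed to use. Everything in it is an assumption: the four-characters-per-token estimate is a crude heuristic and not a real tokenizer, and the class and its fields are hypothetical. Your harness's own context meter is the real source of truth.

```python
from dataclasses import dataclass

# Assumption: roughly 4 characters per token for English text. Real tokenizers vary.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Very rough token estimate based on character count."""
    return len(text) // CHARS_PER_TOKEN


@dataclass
class ContextBudget:
    window_tokens: int             # size of the model's context window
    reserve_fraction: float = 0.5  # the half of the tank you keep in reserve
    used_tokens: int = 0

    def add(self, text: str) -> None:
        """Account for text loaded into context (tool output, files, prompts)."""
        self.used_tokens += estimate_tokens(text)

    @property
    def usable_tokens(self) -> int:
        """Tokens you can spend before dipping into the reserve."""
        return int(self.window_tokens * (1 - self.reserve_fraction))

    def should_checkpoint(self) -> bool:
        """True once the usable half is spent: write state down and clear."""
        return self.used_tokens >= self.usable_tokens
```

The useful part is not the arithmetic but the habit: when `should_checkpoint()` would be true, that is the moment to write state to a stepping stone and start a fresh session, well before auto-compact shows up.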

Next, in Part 3, we’re going to talk about the differences between MCPs, Skills, and Commands, my recommended init setup for all codebases, what to do about UI development, what Claude Code continues to be terrible at, and two stories from my first month of PRs that taught me more about working with AI than everything in this post combined.

Footnotes

(tip: click on footnote to go back to where you were reading)

1 I can hear the objections. From undefined compiler behaviour, to hardware failures, to congestive system collapse, to cosmic-ray bit flips. I know. But you also know what I mean.

2 I walk through this workflow in full detail in Part 3, but the short version: dump context into a prompt, iterate on a plan until it stabilizes (clearing context each time), then implement task-by-task with short-lived agents that die after each unit of work. Review agents get clean context so they’re not biased by the implementation agent’s decisions.

References

- https://mitchellh.com/writing/my-ai-adoption-journey

- https://boristane.com/blog/how-i-use-claude-code/

- https://boristane.com/blog/the-software-development-lifecycle-is-dead/ (we’re going to talk more about this one in Part 3)

- https://alexwood.codes/agents/2026/02/12/ai-production-coding-workflow.html

- https://simonwillison.net/2026/Feb/23/agentic-engineering-patterns/

- https://simonwillison.net/2025/Oct/7/vibe-engineering/

- https://simonwillison.net/guides/agentic-engineering-patterns/interactive-explanations/

- https://steve-yegge.medium.com/six-new-tips-for-better-coding-with-agents-d4e9c86e42a9

- https://x.com/karpathy/status/2015883857489522876?s=20

- https://x.com/drampson11/status/2013637686045585841

- https://github.com/Fission-AI/OpenSpec/

- https://code.claude.com/docs/en/output-styles

- https://code.claude.com/docs/en/agent-teams

- https://arxiv.org/abs/2602.20478