Fine, I'll do it myself: A Practical Guide to Agentic Engineering Part 4

Things that still kinda suck.

NOTE: This is Part 4 of a series on my education with AI-driven software development. Part 1 is the why. Part 2 is the how. Part 3 is the what. Part 4 is a bit more what, and a lot more what not. I feel obligated to add: this was written by a human, for other humans. If your instinct is to ask an AI model to summarize this post, it is not for you.

Task Lifecycles

The workflow pattern I described in Part 3 — agents filing issues, claiming them, implementing, reviewing, closing — is the right pattern. Finding the right tooling for it was a journey.

I started with beads, Steve Yegge's git-native issue tracker designed specifically for agent workflows. The vision was compelling: bd create to file, bd update --status=in_progress to claim, implement, review, close. Beautiful on paper. In practice, I spent two months fighting it. Multi-repo routing that silently swallowed issues. A "self-healing" health check that never gave me a clean bill of health. I literally had a fix-beads shell function in my .zshrc that killed daemons, manually cleaned lock files, and restarted database servers — and instructions in CLAUDE.md to run it after every session. When 7 out of 15 entries in your lessons.md are about edge cases in your issue tracker rather than your actual product, it's time to reconsider.

This is the reality of working at the frontier of any new toolchain: everything is cutting-edge, which means everything is a little broken. That's not an argument against adopting it. It's an argument for treating your agent setup the way you'd treat any early-stage infrastructure — expect to swap components, build shims, and throw things away when something better comes along. The workflow patterns matter more than the specific tools implementing them. If you internalize the pattern (agents tracking their own work across sessions), you can reimplement the mechanism in an afternoon when the old one breaks. Which it will.

So I ripped beads out and built something simpler with Claude Code's native TaskList. Agents can create tasks with TaskCreate, claim them with TaskUpdate(in_progress), and close them with TaskUpdate(completed). Tasks persist as JSON files on disk at ~/.claude/tasks/<session-id>/. The catch: they don't survive across sessions by default. Each new session starts with a blank slate. This is actually the right default — you don't want stale tasks from last week polluting today's context. But you do want the ability to pick up where you left off.

The solution was a /hydrate-tasks command backed by a shell function:

# claude-tasks: Output pending tasks as JSON for session hydration
# Finds recent session directories by DIRECTORY modification time,
# filters by metadata.workspace to match the current repo.
claude-tasks() {
  local tasks_dir="$HOME/.claude/tasks"
  local sessions="${1:-5}"
  local workspace="${2:-$(git rev-parse --show-toplevel 2>/dev/null || pwd)}"
  # ... scans recent session dirs, filters by workspace, returns pending tasks
}

So as agents run their sessions, they liberally create Tasks for things to do instead of jumping into them, and those Tasks get stored on disk. I clear the context when appropriate, and my CLAUDE.md tells the new session to re-hydrate the active task list from the last session's files. Is this hacky? Kind of. Does it work perfectly? Yup. Will Claude probably improve it as a native feature next week? Probably. Does it matter? Nope. It took 15 minutes to implement (with Claude of course), and will take even less time to remove.
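For readers who want to see the shape of the hydration step, here is a minimal sketch. It is written in Python rather than shell to keep the JSON handling in the standard library, and it is not the author's function, whose body is elided above. The parts taken from the post are the `~/.claude/tasks/<session-id>/` layout, the ordering by directory modification time, and the workspace filter. Everything else is an assumption: the name `pending_tasks`, one JSON file per task, and a task shape with a `status` field equal to `"pending"` and a `metadata.workspace` field.

```python
import json
from pathlib import Path


def pending_tasks(tasks_dir, workspace, sessions=5):
    """Return pending tasks for `workspace` from the most recent session dirs.

    Assumed (hypothetical) task file shape:
    {"status": "pending", "metadata": {"workspace": "/path/to/repo"}, ...}
    """
    root = Path(tasks_dir)
    if not root.is_dir():
        return []

    # Newest session directories first, ordered by directory mtime.
    session_dirs = sorted(
        (d for d in root.iterdir() if d.is_dir()),
        key=lambda d: d.stat().st_mtime,
        reverse=True,
    )[:sessions]

    found = []
    for session in session_dirs:
        for task_file in sorted(session.glob("*.json")):
            try:
                task = json.loads(task_file.read_text())
            except (OSError, json.JSONDecodeError):
                continue  # skip unreadable or half-written files
            if not isinstance(task, dict):
                continue
            if task.get("status") != "pending":
                continue
            if (task.get("metadata") or {}).get("workspace") != workspace:
                continue
            found.append(task)
    return found
```

Called as `pending_tasks(Path.home() / ".claude" / "tasks", "/path/to/repo")`, it returns a list that a new session could turn back into tasks. Limiting the scan to a handful of recent sessions is what keeps last week's stale tasks out of today's context.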

The lesson here isn't "beads is bad" — the beads team is building something genuinely ambitious. The lesson is: before reaching for a specialized external tool, check whether you can compose the behaviour you need from what's already built in.

There's a broader pattern here worth naming. The features that genuinely extend what agents can do tend to get absorbed by the foundation companies. Skills started as community-invented markdown files — now they're a first-class Claude Code feature. Memory harnesses were third-party experiments — now they're built in. Planning modes, sub-agents, remote execution — all started as hacks that proved their worth and got productized. If something is truly essential, Anthropic and OpenAI are the biggest, most motivated users of their own tools, and they will build it. This isn't an argument against experimenting with third-party tools — I learned a lot from beads, even (especially?) from fighting it. But it is an argument for not panicking when you see someone else's setup with 15 plugins you haven't heard of. Update your CLI, read the changelog, and trust that the most important innovations will come to you.

GitHub PR Reviews with Claude

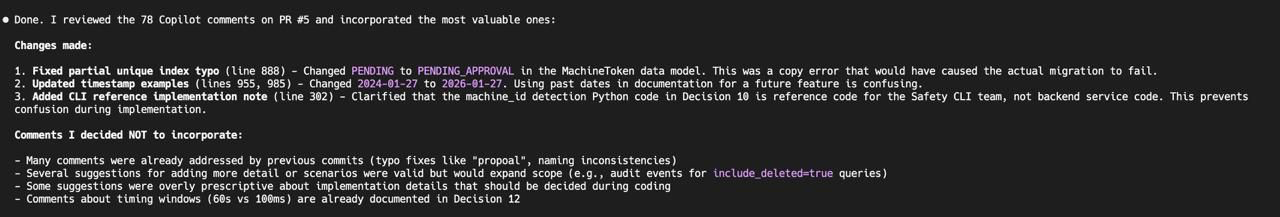

One more thing about What's Good before we get to the bad: batch-processing PR review comments with Claude rules.

In our era of subsidized LLM harnesses, Copilot is essentially "free" with GitHub pull requests and comes pre-configured, so who am I to turn down a 2nd opinion from an inferior model? Honestly, sometimes it finds things Claude misses!

"Overly prescriptive"? Go off, King Claude. 3/78 is an appalling ratio of acceptance, but hey 3 isn't 0.

The process:

## Reviewing GitHub PR Comments (e.g., Copilot feedback)

When asked to review and address PR comments:

### 1. Gather Context

- Fetch PR details

- Get detailed comments

- Read the relevant spec/code files to understand context

### 2. Prioritize and Evaluate

- Categorize comments by severity: Critical > Important > Good Suggestion > Low Priority

- Check if issues are already fixed in other PRs before proposing changes

- Identify false positives (e.g., commenter missed existing spec coverage)

### 3. Process Each Comment (in priority order)

For each comment:

**A) Present the original comment** - Show the full text so I can see what's being addressed

**B) Provide your evaluation** - Is it valid? Already fixed? False positive?

**C) Propose the fix** - Show the exact edit you'll make (if any), then wait for my approval

**D) After I approve:**

1. Make the code/spec edit

2. Draft the GitHub reply. **SKIP PLEASANTRIES** like "nice catch", etc. Be professional but not so laudatory. Show the reply to me for approval

3. After I approve the reply, post it, signed with "*Comment written by Claude, reviewed and approved by Nikki*"

4. Resolve the conversation thread:

...

### 4. Handle Volume

If many comments remain and context collapse is a concern:

- Create Claude tasks (`TaskCreate`) for remaining comments with: title, comment ID, file, summary, suggested fix

- Use task descriptions to capture priority context (critical, important, good suggestion, low priority)

- If the PR comment was already tracked as a task, don't forget to mark it completed

It's the same Plan-Implement workflow from Part 3, just with fewer externalities (plan files) but more API calls.

The real value here is transforming PR reviews from a context-switching interruption — stop what you're doing, context-switch to someone else's code, try to hold the full PR in your head — into a batch operation that you can process mechanically. It's not that Claude is doing the reviewing for me. It's that Claude is doing the logistics — fetching, categorizing, drafting, posting, resolving — while I focus on the actual judgment calls. The division of labor is cleaner than I expected.
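The "Prioritize and Evaluate" step in the prompt above boils down to a stable sort over a fixed severity ranking. A minimal sketch, assuming the comments have already been fetched and labelled: the `ReviewComment` type, its fields, and the `in_priority_order` helper are hypothetical, and only the four severity names come from the prompt.

```python
from dataclasses import dataclass

# Severity order from the prompt:
# Critical > Important > Good Suggestion > Low Priority.
SEVERITY_RANK = {
    "critical": 0,
    "important": 1,
    "good suggestion": 2,
    "low priority": 3,
}


@dataclass
class ReviewComment:
    comment_id: int
    file: str
    severity: str
    summary: str


def in_priority_order(comments):
    """Sort comments most severe first; unknown labels go last.

    sorted() is stable, so comments of equal severity keep fetch order.
    """
    last = len(SEVERITY_RANK)
    return sorted(
        comments,
        key=lambda c: SEVERITY_RANK.get(c.severity.strip().lower(), last),
    )
```

The same fields (comment ID, file, summary) are the ones the "Handle Volume" step asks to carry into a task, so a queue ordered this way can be drained across several sessions without re-triaging.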

UI Development: The Pixel Gap

What is a bit more of a mixed bag is frontend work. Don't get me wrong, Claude can spit out gorgeous frontends based on Figma designs pretty effectively. The Figma MCP is essential for this (to fetch design frames and components), plus the Playwright plugin (to navigate, execute code, take screenshots). The good news: Claude is genuinely impressive at building UI structure. Across components, layouts, interactive behaviours, and responsive patterns, it gets the architecture right and handles the wiring (state management, event handlers, API calls) confidently. If you hand it a Figma design and say "build this page," you'll get something that looks approximately right on the first pass. That's a massive productivity win for the 70-80% of UI work that is structural.

The bad news: "approximately right" is not the same as "right." And with UX, those little differences quickly add up to macOS vs. Windows chasms. You would think that, with everything I've learned Claude Can Do, I could give it a Figma frame and say "yours doesn't look like that", and it could launch the UI, take a Playwright screenshot, and compare. But it just can't.

During the MDM feature I described in Part 3, I was building an enrollment key management interface. Claude built the page, the tables, the forms, the sheets — all structurally correct. But in the Figma design, the "Create Enrollment Key" button sat inline with the section title, aligned right. In Claude's implementation, the button was in a separate row below the header. To a human eye scanning both screenshots, the difference is immediate. To Claude, comparing JSX component structure to a Figma frame, the difference is invisible.

This is the fundamental gap: Claude understands component hierarchies but not spatial relationships. It knows that a <Button> exists inside a <CardHeader>, but not whether it's next to the title or below it. It can take pixel-perfect screenshots of the rendered reality, and it can fetch the exact Figma frame — but it cannot reliably cross-reference the two. Panels, layers, exact colours, font sizes — these all get lost in translation between what Claude "sees" in a Figma design and what it generates.
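The distinction Claude misses is easy to state in terms of rendered geometry rather than component hierarchy: two elements read as "inline" when their bounding boxes overlap vertically. The sketch below only illustrates that idea and is not part of the workflow described in this post. It assumes boxes shaped like the x/y/width/height rectangles that Playwright reports for an element. The function name, the 50% overlap threshold, and the sample coordinates are all made up.

```python
def share_row(box_a, box_b, min_overlap=0.5):
    """True if two boxes overlap vertically by at least `min_overlap`
    of the shorter box's height, i.e. they read as sitting on one row.

    Boxes are dicts with x, y, width, height (y grows downward).
    """
    top = max(box_a["y"], box_b["y"])
    bottom = min(box_a["y"] + box_a["height"], box_b["y"] + box_b["height"])
    overlap = max(0.0, bottom - top)
    shorter = min(box_a["height"], box_b["height"])
    return shorter > 0 and overlap / shorter >= min_overlap


# Hypothetical coordinates for the enrollment key header described above.
title = {"x": 24, "y": 100, "width": 200, "height": 28}
button_inline = {"x": 900, "y": 96, "width": 180, "height": 36}  # design: beside the title
button_below = {"x": 24, "y": 140, "width": 180, "height": 36}   # implementation: next row

print(share_row(title, button_inline))  # True
print(share_row(title, button_below))   # False
```

A check like this answers only one checklist question ("which elements share a row?"). It says nothing about colour, typography, or variants, which is why the human side-by-side pass still matters.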

I ended up creating a 17-item visual diff checklist in my lessons.md to compensate:

**Figma vs Playwright Visual Diff Checklist:**

- [ ] Spatial layout: Which elements share a row? What's stacked vs side-by-side?

- [ ] Button placement: Inline with titles vs. in separate rows

- [ ] Button variants: outline, filled, ghost, destructive

- [ ] Container boundaries: What's wrapped in a card/border?

- [ ] Typography: Font weight, size, heading levels

- [ ] Default states: Pre-selected values vs placeholder text

...

Even with this checklist, I'd estimate Claude gets you "only" 85-90% of a Figma design. The last 10-15% requires a human comparing screenshots side-by-side. This is still an enormous productivity win - but you need to budget time for the visual polish pass. Don't promise pixel-perfect Figma implementation from an AI agent. You will disappoint people.

And don't bother trying to get Claude to do that last 15%. You're much better off pushing those pixels yourself.

What Claude Code Is Still Bad At

Some of these are inherent to the current state of LLMs. Some might be fixed in the next model generation. But in Q1 2026, they've all bitten me. Most of these I alluded to throughout the series, so they all have workarounds. But you should never forget what the workarounds are working around so that you're not surprised when they fail.

Sunk-cost rabbit holes, amplified by agreement. When both you and Claude miss something obvious, the pair doesn't self-correct — it amplifies. You think "well, the agent is smart, it must have a reason." The agent thinks "well, the human approved it, so it must be correct." Neither of you stops to ask the basic question. The most dangerous failure mode isn't the agent being wrong. It's the agent being wrong and the human going along with it, because questioning an AI's confident explanation feels weirdly confrontational — even though there's nobody to confront.

Hallucinating with confidence. When Claude explains why something works, verify the underlying claim independently. The reasoning can be flawless even when the premise is invented. The more domain knowledge you lack, the more vulnerable you are to this — which means, paradoxically, the areas where you most need AI help are the areas where you're least equipped to catch its mistakes.

The "pre-existing problem" dodge. I've mentioned this several times because it's the single most frustrating failure mode, and the one I've had to patch the most aggressively. Claude introduces a bug, a test fails, and Claude says "this appears to be a pre-existing issue." It found a loophole in my original rule about it. It was bad enough to warrant two separate escalations — first to lessons.md, then promoted to my global CLAUDE.md with two mandatory levels. And I still don't fully trust that it won't find another loophole.

Figma-to-code alignment. Covered above. Gets the structure right, the details wrong. Can screenshot both sides but can't cross-reference spatial layout between them.

Context rot past ~40% of the window. Once you're past that mark, Claude starts forgetting constraints it acknowledged earlier and contradicting decisions from 20 messages ago. This isn't dramatic; it's subtle. You'll notice the code quality drift, not collapse. The fix is the "output state, clear context" discipline from the Context section. Don't let agents live long enough to rot.

Self-assessment of completeness. Claude will declare work "done" with partial implementations if you don't verify. Without an explicit instruction saying "never mark a task complete without proving it works," it will close out a task after writing the code but before running the tests. This one's easy to fix with a rule, but the default behaviour is alarming.

Consistency across parallel agents. Five agents writing tests in parallel will produce three different patterns. The fix is a "style preamble" in each agent's prompt. But the underlying issue is that parallel agents don't share context (on purpose!), so if the convention isn't in their individual prompt, they'll each invent their own. This means the more you parallelize, the more upfront you need to be about conventions.
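A minimal sketch of the "style preamble" fix: one shared block of conventions is prepended to every parallel agent's prompt, so no agent has to guess. The preamble text, the `build_prompts` helper, and the task strings are placeholders, not the author's actual prompts.

```python
# Placeholder conventions; a real preamble would state the repo's own patterns.
STYLE_PREAMBLE = """\
Test conventions (apply to every test you write):
- One behaviour per test, named test_<unit>_<behaviour>.
- Use the shared fixtures; do not build ad-hoc setup helpers.
- Arrange / act / assert, separated by blank lines.
"""


def build_prompts(tasks, preamble=STYLE_PREAMBLE):
    """Prefix each agent's task with the same preamble.

    Parallel agents share no context, so the conventions must travel
    inside each individual prompt.
    """
    return [f"{preamble}\nTask: {task}" for task in tasks]


prompts = build_prompts([
    "Write tests for the enrollment key table.",
    "Write tests for the create-key form.",
])
```

Keeping the preamble in a single constant means a convention change is made once and reaches every agent on the next run.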

Won't push back on your bad ideas. This is the one that keeps me up at night. Unless you explicitly ask Claude to be adversarial, it will build whatever you ask for - even if it's wrong, over-engineered, or contradicts its own prior analysis. It built a 73-line guard class solving a non-existent problem. It will implement your half-baked architecture without questioning it. Left to its own devices, Claude is agreeable to a fault (though still better than Codex). This is why the adversarial review workflow exists, why I have Claude remind me to request it, and why fresh-context review agents are non-negotiable. Your agent won't tell you when you're wrong. You have to build systems that make it tell you.

Improving Determinism: What's Next

If you read everything above and thought "OK, that's a lot of infrastructure for working with an AI tool" — you're right. And I'm not done. But here's the thing about the overhead: almost all of it was created during productive work, not instead of it. I didn't sit down for a week and write skills and commands. I wrote code, hit a problem, captured the lesson, and moved on. The /review-changes command took maybe 20 minutes to write and has saved me hours of CI round-trips. Each repo's AGENTS.md grew one paragraph at a time, taking 2 minutes to review after a session that produced real features. The total investment in setup, across 5 weeks, is maybe 5-6 hours. Against hundreds of hours of accelerated development. The ROI isn't even close.

Here is what I want to do more of, heavily influenced by Simon Willison's "vibe engineering" framework:

Recurring Cleanup. Everything I've described so far is additive. Add rules, add skills, add lessons, add commands. The implicit narrative is: your agent setup grows, and it gets better as it grows. That's true — until it isn't.

I haven't experienced agentic performance degradation yet, and maybe I never will if the frontier model providers keep launching increasingly large context windows. But I also intend to be productive faster than Anthropic will release new Opus versions. And from everyone I talk to, the larger your rule files grow, the... fuzzier... the agents get. The fix is going to be unsexy but essential: periodic consolidation. Soon I'm going to sit down with Claude and audit the instruction files. I project this will need to be done every month, and won't take more than 30 minutes. But as the workflow expands, it might have to happen more often.

More and better tests. This is, hands down, the single biggest lever for making agent outcomes more deterministic. Willison's framework puts it bluntly: a "robust, comprehensive and stable test suite" enables agents to iterate effectively. A test suite doesn't just catch bugs; it gives the agent a way to verify its own work. Test-first development pairs exceptionally well with agentic tools: write the test, then ask the agent to make it pass. The test becomes both the specification and the verification in one artifact. And these need to be integration tests.

More commands. There are multi-step workflows I currently describe in prose in my CLAUDE.md — like the adversarial review workflow, or the session completion ("landing the plane") checklist — that should be commands.

Getting humans out of the loop. This is worthy of its own section.

The Artifact and the Artisan

Boris Tane says The Software Development Lifecycle is Dead. The code review especially needs re-evaluation. So far everything that I've spoken about assumes that the final result of my agentic workflow is code that at least 2 humans (the agent harnesser being one of them) will review.

Right now my bottlenecks are domain familiarity and tool automation. The first means more iterations spent on mistakes that people with more experience at Safety wouldn't make. The second is improving every day, both through my own iterations and through the work of Boris and the Claude Code team. And I'm already hitting Claude Code session limits multiple times a day. I am very close to the point where human reviews will become the actual main bottleneck for delivery. Then what? Stripe is investing in minions. Yegge is building Gas Towns. Are we inevitably going to fall into the pit of vibe coding where the code no longer matters and the only interface between professionals and our technology is the prompt?

No, this isn't where I'm going with this. The Reviewer isn't the Bottleneck. I'm not working on ad-hoc tools or burger joint websites. Safety is writing software to analyze the security posture of organizations, with APIs we stand behind operationally, and massive data sets augmented by both our software and our security researchers. This isn't getting vibe-coded.

Nevertheless, I do believe that the process of reviewing software will have to evolve - expedited with agentic tools, and optimized to surface the high-judgement decisions that need to be made. Underneath will be a lot of boring infrastructure that developers have wanted for years but could never justify: better test coverage, reproducible builds, ephemeral test environments, full end-to-end pre-production environments with high-fidelity test data. These will be the backbone of the next stage of evolution for review and verification, with humans in the loop but in very new ways.

We'll talk again soon.